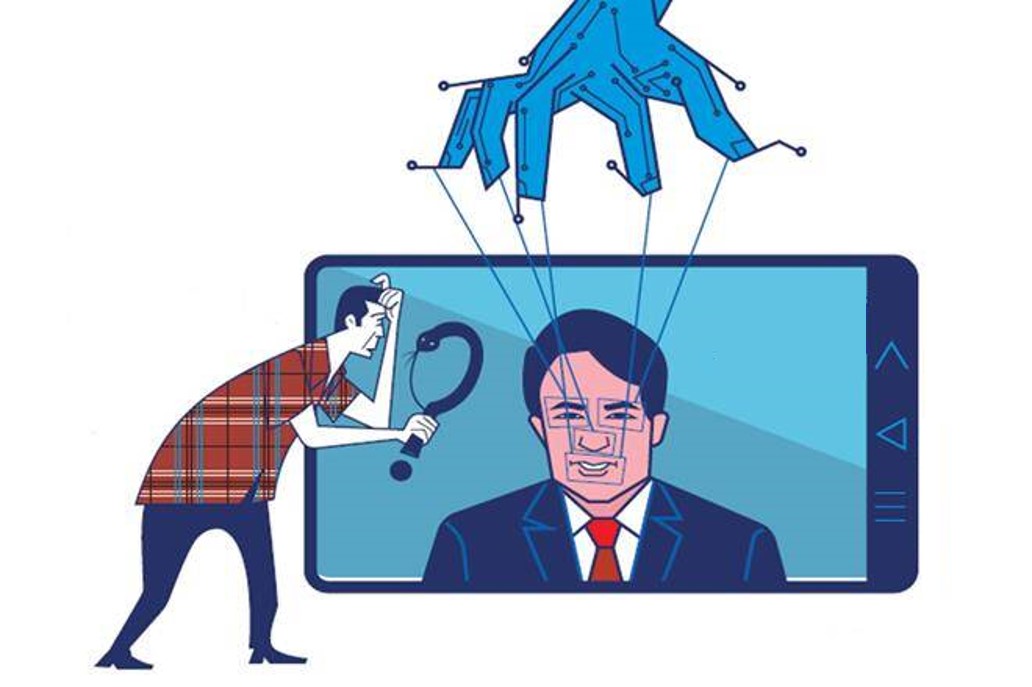

To accurately analyze the danger of synthetic media, it is worth comparing it with the methods commonly used today in crimes targeting companies. Predicting how synthetic media could develop and amplify financial crime schemes that have already caused billions of dollars in losses is important for assessing the level of threat.

Directly comparing synthetic media, and its most powerful weapon, the deepfake, with more widely used tools helps us understand which threat scenarios could greatly empower attackers and will require new countermeasures. In the same way, it also helps identify scenarios that are no more dangerous than today’s threats and do not require additional precautions.

Let’s briefly consider what synthetic media and deepfakes could add to the financial crimes that most commonly target companies.

Payment fraud accounts for nearly half of cybercrime losses

Tricking firms into making fraudulent payments allowed fraudsters to steal more than $1.7 billion from companies in the US in 2019, according to the Federal Bureau of Investigation (FBI). That is almost half of the total reported losses from all cybercrime. Criminals often hack into the email account of a senior executive, such as the chief executive officer (CEO), and then contact a finance officer with an urgent request for a bank transfer. Criminals can also pose as a supplier or an employee of the company and submit fake invoices.

In companies that require emailed payment requests to be confirmed by phone, deepfakes can make those confirmation calls more convincing. In some cases, a convincing deepfake call could even eliminate the need to hack or spoof an email account at all. Employees who have not been trained to recognize such attacks may find deepfake video calls even more convincing than voice calls.

The use of deepfakes to commit fraud has already been documented on a small scale. In 2019, criminals cloned the voice of a German CEO and successfully tricked an employee of a British company into sending a bank transfer of $243,000. A more ambitious scheme could add another layer of persuasion by using deepfakes in live video calls. Current technology allows an offender to replace one face with another in real time during a video call. Because video calls often have poor image quality, flaws in the deepfake can go unnoticed or be overlooked.

Stock manipulation through disinformation campaigns

The Internet offers many ways for disinformation campaigns to manipulate stock prices. Unidentified attackers often make false or misleading claims about a targeted stock through blogs, forums, social media, bot networks, or spam. These campaigns aim to artificially raise the stock price (a “pump and dump” scheme) or lower it (a “short and distort” scheme) in order to generate quick profits. Because the stocks of small companies are easier to manipulate, small companies have been the most common targets. However, large corporations can also fall victim to sophisticated disinformation campaigns, which sometimes have both political and financial motives.

Deepfakes can lower a company’s stock price by producing seemingly credible false content, such as fabricated statements by a company leader. For example, an attacker could post a deepfake video in which a targeted CEO announces the company’s bankruptcy, admits to misconduct, or makes highly offensive comments. Alternatively, deepfakes can be designed to raise a company’s stock price by fabricating positive events. For example, deepfake videos could show celebrities endorsing or using the company’s product.

A well-crafted deepfake shared through social media or spam networks can be effective in manipulating thinly traded stocks. Smaller companies often lack the resources and expertise to mount a quick, persuasive response to short-and-distort schemes. Even if a deepfake is quickly debunked, perpetrators can still profit from short-term trades.

Even if a deepfake is not believed, its trace remains

Deepfakes may also create a new vulnerability for large companies, whose stock prices have traditionally been more resistant to manipulation. Highly visible company leaders leave behind large volumes of media interviews, earnings calls, and other public recordings. This material makes it easier for attackers to produce convincing deepfakes.

A particularly damaging scenario would involve fabricating a private conversation, for example a synthesized recording of a CEO allegedly using sexist language. Since it may be impossible to prove definitively that a private conversation never took place, the CEO, especially one with prior credibility issues, would face a very difficult situation. Fiction could start to blend with fact and affect the market even more.

Even if a credible deepfake is refuted, it will likely have long-term negative consequences for a company’s reputation. As with other forms of misinformation, deepfakes can leave lasting psychological impressions on some viewers even after being debunked. Experiments have shown that a significant minority of viewers will believe a deepfake is real despite clear warnings that it is fake. A long-term loss of confidence can drive down revenue and stock prices over time, especially for consumer-facing companies.

Stock manipulation by bots that fake public opinion on social media

Stock prices can also be manipulated by creating a false picture of mass sentiment. For example, staging a fake public backlash against a brand on social media can create a bandwagon effect, in which unfounded negative opinion spreads and, over time, becomes real. Social media bots are already used for this purpose. Attackers create a large number of fake identities on a platform and then coordinate mass posting that promotes or defames specific companies. Although social media platforms use many methods, including machine learning, to identify and remove accounts that violate their policies against spam and fake profiles, this work is extremely difficult and labor-intensive.

While no cases have yet been publicly documented in this area, deep learning could theoretically be used to create AI-driven networks of synthetic social bots that better evade detection and are more persuasive. Attackers have already started using AI-generated profile photos of people who do not exist, which defeats reverse image searches that would otherwise expose a reused photo. Several sophisticated influence campaigns, attributed to shadowy media firms and suspected intelligence actors, are known to have used this technique. The next step could be for algorithms to write the posts themselves.

Synthetic bots are smarter and more believable than traditional bots

Traditional bots create duplicate or random posts, while synthetic social bots can publish new, personalized content. Because they are aware of their previous posts, they can maintain consistent personalities, writing styles, interests, and biographies over time. The most convincing synthetic bots will attract genuine human followers, which increases the reach of their messages and makes them harder to detect.

Each bot uses unique language and storytelling consistent with its persona, so a coordinated campaign can look like widespread, genuine consumer sentiment and may therefore affect the stock price. For example, a network of bots could all claim to have contracted foodborne illness at the same fast food chain.
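To illustrate why varied, persona-driven posts are harder to catch than copied ones, here is a minimal Python sketch of one simple heuristic a platform might use: flagging pairs of posts whose sets of three-word sequences (shingles) overlap heavily, measured by Jaccard similarity. The function names, the 0.6 threshold, the brand “Burger Town”, and the sample posts are all hypothetical; real platform detection systems combine many more signals.

```python
from itertools import combinations


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word shingles (overlapping word sequences) in text."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)}
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def flag_near_duplicates(posts: list[str], threshold: float = 0.6) -> list[tuple[int, int]]:
    """Return index pairs of posts whose shingle sets are at least `threshold` similar.

    The threshold is an illustrative assumption, not a value any platform publishes.
    """
    sets = [shingles(p) for p in posts]
    return [
        (i, j)
        for i, j in combinations(range(len(posts)), 2)
        if jaccard(sets[i], sets[j]) >= threshold
    ]


# Hypothetical traditional bots: near-identical copies of one message.
template_posts = [
    "got food poisoning at burger town today avoid this place",
    "got food poisoning at burger town today avoid this place!!",
    "got food poisoning at burger town today avoid this place now",
]

# Hypothetical synthetic bots: the same claim, each in its own voice.
synthetic_posts = [
    "my kid was up all night sick after our burger town dinner",
    "never again, burger town. spent sunday hugging the toilet",
    "anyone else feel awful after eating at burger town this week?",
]

print(flag_near_duplicates(template_posts))   # → [(0, 1), (0, 2), (1, 2)]
print(flag_near_duplicates(synthetic_posts))  # → []
```

The copy-paste posts are all flagged, while the varied posts making the same claim share no three-word sequences and pass unnoticed, which is the gap synthetic bots would exploit.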

The danger grows as stock trading becomes digitized

Stock manipulators could use synthetic social bots to exploit investors’ practice of analyzing social media activity for trends in consumer sentiment. A growing number of fintech companies market “social sentiment” tools that analyze what social media users say about companies. In some cases, social sentiment data has been integrated with automated trading algorithms, allowing computers to execute stock trades without human intervention based on apparent trends in social media activity. Wider use of social sentiment analysis, and its tighter integration with automated trading algorithms, will increase the power of synthetic social bots to manipulate stock prices.
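The following minimal Python sketch shows why this coupling matters. It assumes a deliberately naive, hypothetical trading rule (not any vendor’s product): score each post with a tiny keyword lexicon, average the scores, and sell when the average drops below a threshold. The lexicon, the threshold of -0.2, the brand “Burger Town”, and the sample posts are all illustrative assumptions; commercial sentiment tools use trained models and many more inputs.

```python
from statistics import mean

# Hypothetical keyword lexicon; real sentiment tools use trained models.
POSITIVE = {"love", "great", "delicious", "recommend"}
NEGATIVE = {"sick", "poisoning", "awful", "avoid"}


def score(post: str) -> int:
    """Crude sentiment: +1 per positive keyword, -1 per negative keyword."""
    words = [w.strip(".,!?") for w in post.lower().split()]
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def trade_signal(posts: list[str], sell_below: float = -0.2) -> str:
    """Hypothetical automated rule: SELL if average sentiment falls below the threshold."""
    avg = mean(score(p) for p in posts)
    return "SELL" if avg < sell_below else "HOLD"


# Hypothetical organic posts from real customers.
organic = [
    "love the new menu at burger town",
    "burger town fries are great",
    "quick lunch at burger town today",
    "would recommend the burger town milkshake",
]

# Hypothetical coordinated synthetic bots, each phrasing the same claim differently.
bot_posts = [
    "got sick after eating at burger town",
    "food poisoning from burger town, avoid",
    "felt awful all night after burger town",
    "burger town made my whole family sick",
    "avoid burger town, poisoning risk",
    "so sick after that burger town meal",
]

print(trade_signal(organic))              # → HOLD (average 0.75)
print(trade_signal(organic + bot_posts))  # → SELL (average -0.5)
```

Because the rule only counts words, it cannot tell whether the negative posts come from real customers or from a coordinated bot network; six injected posts are enough to flip the decision.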

It is important to note that, although most of these scenarios have not yet materialized, any of them could be carried out at an unexpected moment. Many of the technologies used in today’s financial crimes are licensed or commercially controlled, which provides an opportunity to monitor their spread and restrict future abuse. By contrast, the synthetic media technologies in these scenarios targeting companies are widely and easily available, mostly for free.

How synthetic media and deepfakes threaten financial institutions and the financial system is the subject of the next post. Even if the financial system as a whole is not yet highly vulnerable to a massive deepfake attack, the danger synthetic media poses to companies, as to individuals, is far from negligible.